Ep. 31: Are We Addicted to AI?

In this episode I dig into whether we’re addicted to AI by examining my own switch from ChatGPT to Claude and what usage limits reveal about dependency. I break down the business models behind OpenAI’s “unlimited” usage versus Anthropic’s enforced limits, explore the Uber playbook of subsidized addiction, and share why both companies’ controversial partnerships (OpenAI with Trump/ICE, Anthropic with Palantir) complicate the choice of which AI to use.

Out of the Pan and Into the Future Fire

So, I’m using Claude now. I’m trying to make the switch but, and this is somewhat tangential to the main topic today, it really does feel like out of the pan and into the future fire.

I said this in last week’s episode and I still feel that way, and this time I’m giving you more than just vibes.

Let’s talk about OpenAI first, because they’re objectively and openly the worst.

- Cofounder Greg Brockman donated $25 million to Trump back in September of 2025

- They’re pushing back against AI legislation

- Their software is being used for ICE application screenings

Now let’s talk about Anthropic. No major Trump donations, which is good…but they’re in bed big time with Palantir. Anthropic actually partnered with Palantir back in 2024, which means Palantir’s government and enterprise clients can now deploy Claude models within Palantir’s platforms.

So what’s Palantir? It’s a data analytics company that builds surveillance and intelligence software primarily for government agencies, military, and law enforcement.

And why is this partnership so controversial? Let me count the ways:

- Palantir provided software to ICE that’s been used in deportation operations, including tracking and arresting undocumented immigrants

- They work extensively with the Department of Defense, intelligence agencies, and foreign militaries on targeting and surveillance systems

- Their software has been used by law enforcement for predictive policing programs that have been criticized for amplifying racial bias (this is objectively horrendous)

- The core product connects disparate data sources to enable mass surveillance capabilities that weren’t previously possible

- They operate with minimal public accountability about how their tools are actually being used by government clients

So here I am, leaving ChatGPT to go to Anthropic…out of the pan and into the present fire.

What am I going to do? I honestly don’t know. I’m actually taking this as a sign to change the podcast name because if OpenAI is anything like CrossFit, they’re gonna come for me at some point for using the name ChatGPT.

But as it relates to the bigger question of “what am I gonna do”, that’s a good segue into what I actually want to discuss today: Are we addicted to AI?

Yes. The Answer is Yes.

I’ve been using Claude exclusively for like two weeks now and there’s this super interesting feature that’s basically all I want to talk about today: usage limits.

Here’s the thing about ChatGPT’s paid tier: you can basically use it as much as you want. Twenty bucks a month gives you pretty much unlimited usage…and this should be a red flag to all of us.

HOW THE FUCK ARE THEY PAYING FOR THIS?!

The answer is simple: they’re not. Investors are. OpenAI is projected to LOSE $14 billion in 2026. Why would they do this? So they can get you addicted, lock you in, and recoup their losses down the road.

This is straight out of the Uber playbook. Uber took 14 years to become profitable. They were founded in 2009 and didn’t see their first annual profit until 2023. In 2022 alone, they lost $9.14 billion. They spent billions in investor cash subsidizing rides below cost. In some cities they were paying drivers $17.50 per hour while only charging customers $9.10 per hour.
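To put a number on that subsidy, here’s a quick back-of-the-napkin sketch in Python. It only uses the two hourly figures quoted above. The function name and the 40-hour week are my own illustrative assumptions, not anything Uber published.

```python
# Back-of-the-napkin subsidy math using the two hourly figures quoted above.
# Amounts are kept in cents to avoid floating-point rounding surprises.
DRIVER_PAY_CENTS = 1750     # $17.50 paid to the driver per hour
CUSTOMER_FARE_CENTS = 910   # $9.10 charged to the customer per hour


def subsidy_per_hour_cents(driver_pay: int, customer_fare: int) -> int:
    """Return how much investor cash covers each hour of driving."""
    return driver_pay - customer_fare


gap = subsidy_per_hour_cents(DRIVER_PAY_CENTS, CUSTOMER_FARE_CENTS)
share = gap / DRIVER_PAY_CENTS  # fraction of driver pay that is subsidy

print(f"Subsidy per hour: ${gap / 100:.2f}")
print(f"Share of driver pay subsidized: {share:.0%}")

# Hypothetical: one driver working a 40-hour week (assumed, for illustration).
HOURS_PER_WEEK = 40
print(f"Subsidy per driver-week: ${gap * HOURS_PER_WEEK / 100:.2f}")
```

On those figures, $8.40 of every driver-hour (about 48% of the driver’s pay) was coming out of investor money rather than the fare.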

So how did they eventually turn a profit? By cutting driver subsidies, raising commission rates from 20% to 25-35%, improving operational efficiency, diversifying into Uber Eats and Freight, and raising prices across the board. And here’s the kicker: people kept taking Uber despite the price increases…because they were hooked.

I’m not a betting Maestro, but I’d say OpenAI is fixin’ to do the same thing.

Anthropic’s Usage Limits

The reason I want to focus on Anthropic’s usage limits is simple: They actually have them and they enforce them.

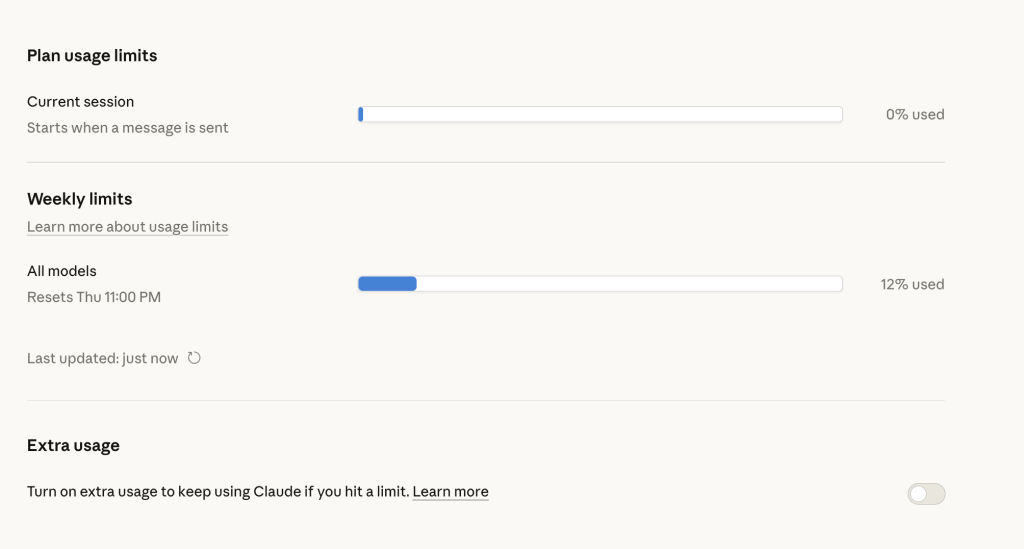

To see your plan’s usage limits and how much you’ve consumed, on desktop, go to account → settings → usage. There’s both a current session limit AND a weekly limit.

The more expensive models use up your allotment faster. And yes, you can pay for more if you want. You can upgrade to the Max plan which starts at $100 a month, or you can enable consumption-based pricing so that when you hit your limit, you just keep going at standard API rates without interruption.
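If it helps to see the moving parts, here’s a rough toy model of how I picture it working: a session limit, a weekly limit, pricier models burning through the allotment faster, and an optional pay-as-you-go overflow. To be super clear, every name and number here (`UsageMeter`, `MODEL_WEIGHT`, the limits, the weights) is made up by me for illustration. I have no idea how Anthropic actually implements this.

```python
from dataclasses import dataclass

# Everything here is hypothetical: the unit sizes, the weights, and the
# limits are invented to illustrate the idea, not taken from Anthropic.
MODEL_WEIGHT = {"small": 1, "large": 5}  # assumed: pricier model burns units faster


@dataclass
class UsageMeter:
    session_limit: int            # units allowed in the current session
    weekly_limit: int             # units allowed across the whole week
    pay_as_you_go: bool = False   # assumed flag for consumption-based overflow
    session_used: int = 0
    weekly_used: int = 0
    overflow_units: int = 0       # units that would be billed separately

    def send(self, model: str, units: int) -> bool:
        """Record a request; return False if a limit blocks it."""
        cost = units * MODEL_WEIGHT[model]
        over_session = self.session_used + cost > self.session_limit
        over_weekly = self.weekly_used + cost > self.weekly_limit
        if over_session or over_weekly:
            if not self.pay_as_you_go:
                return False          # hard stop: wait for the limit to reset
            # Simplification: the whole request is counted as paid overflow.
            self.overflow_units += cost
            return True
        self.session_used += cost
        self.weekly_used += cost
        return True


meter = UsageMeter(session_limit=100, weekly_limit=500)
print(meter.send("small", 40))  # fits: 40 of 100 session units used
print(meter.send("large", 10))  # costs 50 units, bringing the session to 90
print(meter.send("large", 10))  # would hit 140, so this one gets blocked
```

The third request is the “you can’t use this” moment. Flip `pay_as_you_go` to `True` and that same request would go through and land in `overflow_units` instead.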

As the image above demonstrates, you can literally watch your allotment get eaten up in real time via that little progress bar interface, and I’m not actually mad about this (though it would be nice to have that bar on the main page instead of hidden inside settings).

These visually-represented usage limits have prompted three big thoughts for me:

- Are we addicted to AI?

- It feels like Anthropic is positioning themselves well from a financial perspective. They’re actually charging people appropriately to use this thing and creating real limits.

- I wonder when OpenAI is going to raise their prices. They have to eventually. Throttling usage? Introducing new tiers? I dunno. But to quote the great Sam Cooke, “A change is gonna come…”

Clearly I really enjoy the business and finance side of this stuff, but to bring it back to the original question of this episode (are we addicted to AI?), I think putting usage limits on something is the easiest way to find out.

Saying “you can’t use this” is incredibly eye-opening.

The Addiction Is Real

For me personally, I really love using AI for coding and learning to code, and I really love using it as a thought partner that I can just word vomit to. It can keep up with me. It never asks me to repeat myself. And…it “knows” me (which became really apparent when I switched to Claude and suddenly it didn’t know me the way ChatGPT did).

The addiction is real.

Like, I’m out here with a whole ass podcast trying to figure out which is the least shitty of two shitty companies, when the actual answer is probably to use neither of them, to use none of it, and to just walk away from LLMs altogether.

But I’m human.

To that end, maybe Anthropic’s usage limits are actually one of the best second steps we can take when facing this addiction (awareness being the first step). Because when you have to pay, you pay attention. You’re not just using it all willy nilly, asking it any and every question that comes to mind and having zero regard for how many tokens you’re using.

This is why I’ll stand 10 toes down on the fact that OpenAI is 100% trying to create that addiction so they can create that lock-in and make money off of it in the future. With Claude, you see that blue usage bar filling up and it makes you think twice. With ChatGPT, the only thing slowing you down is your own mortality and the fact that you need to sleep.

A Question For Another Day

I think we’ll probably save this for another episode, but here’s a fun question to sit with: “How much should it actually cost per month to use LLMs?”

These things are out here acting as thought partners, CMOs, CFOs, designers, coders, strategists, therapists…for twenty dollars a month. Like…c’mon now.

I personally would be willing to pay $100 a month, especially if I knew that money wasn’t going to the worst people in the world or funding partnerships with horrendous organizations. I’m all for paying for what we use. Compute isn’t free.

But here’s the flip side, and this is something I talked about in my 2022 video when ChatGPT first debuted: I don’t want this to be a tool that is only for folks who can afford it.

Generative AI is a truly remarkable technology that democratizes so much. Yeah, I get that an ad-supported tier is one way to make it accessible, but I also know that these tech companies aren’t rolling out ad-supported tiers because they genuinely care about accessibility. Be for real.

Now, open source models, local models, smaller models, these are all real things and I believe them to be viable solutions down the road. I’m honestly not knowledgeable enough about them YET to speak to them as actual solutions, but Imma still throw them out there so this episode isn’t all doom and gloom.

How I Used Claude This Week

Each episode I share a quick example of how I used whatever LLM I used that week.

This week I used Claude to code a sales page for a new offer I’m beta testing. You can check out episode 20 for the full rundown of how I approach sales page creation using an LLM.

I will say that the process was not as smooth as it was with ChatGPT, and I nearly maxed out my session allotment. But you know, growing pains. I anticipate it’ll get faster and better.

But honestly…who knows what LLM I’m actually gonna stick with at this point. Ay.

Da Wrap-up

This episode was more questions than answers, but hopefully you didn’t mind having a mirror held up and a suggestion for a moment of self-reflection. No stones were thrown. No glass houses were busted. We’re in this together, and the first step is awareness.

As always, endlessly appreciative of you and your curiosity.

Catch you next Thursday.

Maestro out.